Your Robot Learned to Push — Can It Slide?

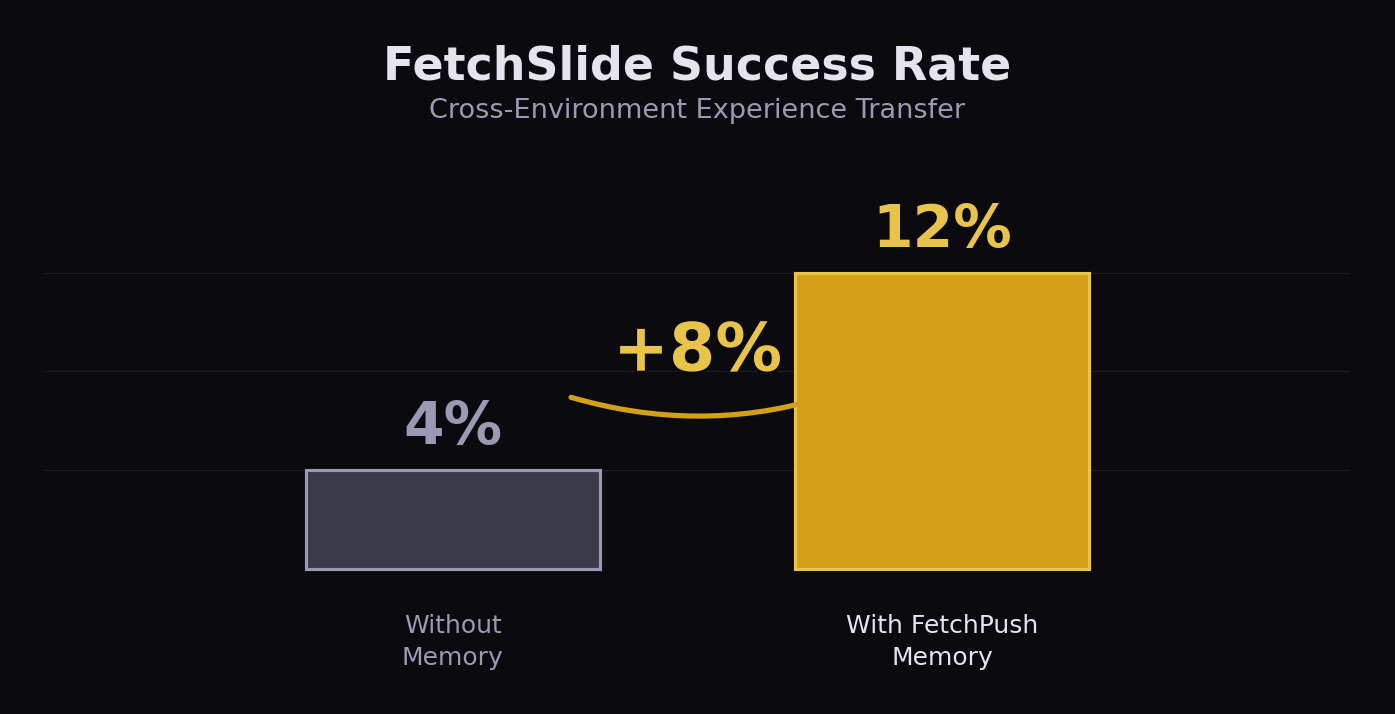

We ran an experiment: we trained a robot to push objects in the MuJoCo FetchPush environment, then dropped it into FetchSlide, an environment it had never seen. Baseline success rate: 4%. With memory from the wrong task: 12%.

No model fine-tuning. No architecture changes. The robot used experience from a completely different task to perform better in a new one.

This shouldn't work. Pushing and sliding are different skills — different dynamics, different force profiles, different success criteria. But it did work. Here's why.

Why This Matters

Transfer learning is a well-studied problem: take a model trained on Task A and fine-tune it for Task B. It works, but it requires retraining — gradient updates, frozen layers, domain adaptation.

What we did is different. We didn't transfer model weights. We transferred memories.

The robot in FetchPush learned things like: "approaching from angle 0.34 with peak force 12.5N works for targets at distance 0.15m." These are experience parameters — not tied to a specific task, but to the physics of robotic manipulation.

FetchPush and FetchSlide share the same robot (7-DOF Fetch arm), similar workspace geometry, and overlapping physical constraints — distance estimation, force calibration, approach strategy. The memories don't store "how to push." They store "what physical parameters worked in this spatial context."

That's why a pushing memory can help with sliding.
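As a rough illustration of what such a task-agnostic memory might look like, the sketch below models one experience as a small record and serializes it to a JSON context string. The `Experience` class and its field names are hypothetical; only the nested `task` / `spatial` / `params` layout mirrors the context string used in the robotmem call later in this post, and this is not robotmem's internal representation.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Experience:
    """One stored episode outcome (hypothetical structure, for illustration)."""
    success: bool
    target: tuple[float, float, float]  # goal position in the workspace
    force_peak: float                   # peak force, in newtons
    approach_angle: float               # approach angle (unit not stated in the post)

    def to_context(self) -> str:
        """Serialize to a nested JSON string with task/spatial/params keys."""
        return json.dumps({
            "task": {"success": self.success},
            "spatial": {"target": list(self.target)},
            "params": {
                "force_peak": self.force_peak,
                "approach_angle": self.approach_angle,
            },
        })


push_memory = Experience(True, (1.3, 0.7, 0.42), 12.5, 0.34)
context = push_memory.to_context()
```

Nothing in the record names the task that produced it, which is what lets a FetchSlide episode reuse it.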

The Experiment

We used robotmem to store and retrieve experience across environments. Three phases, 50 episodes each:

- Phase A — FetchSlide with a heuristic policy. No memory at all. 4% success.

- Phase B — Same FetchSlide task, but before each episode, the robot recalls experiences from its FetchPush memory. It uses retrieved parameters (force, angle, approach distance) to bias its policy. 12% success.

- Phase C — FetchSlide with its own accumulated FetchSlide memories. Higher still, but the cross-environment jump from 4% to 12% is the headline.
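The three phases differ only in where recalled memories come from, so the protocol can be sketched as a small table plus a loop. This is an assumed harness, not the code in the repository: the `Phase` class, `run_protocol`, and the `run_episode` callback are hypothetical names, and the placeholder runner exists only to exercise the loop.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Phase:
    name: str
    memory_source: Optional[str]  # environment whose memories are recalled, or None
    episodes: int = 50


# All three phases are evaluated on FetchSlide; only the memory source changes.
PROTOCOL = [
    Phase("A", memory_source=None),
    Phase("B", memory_source="FetchPush"),
    Phase("C", memory_source="FetchSlide"),
]


def run_protocol(run_episode: Callable[[Optional[str], int], bool]) -> dict[str, float]:
    """Return the success rate per phase.

    `run_episode(memory_source, episode_index)` is a hypothetical callback that
    runs one FetchSlide episode and reports whether it succeeded.
    """
    rates = {}
    for phase in PROTOCOL:
        successes = sum(
            bool(run_episode(phase.memory_source, i)) for i in range(phase.episodes)
        )
        rates[phase.name] = successes / phase.episodes
    return rates


def always_fail(memory_source: Optional[str], episode_index: int) -> bool:
    """Placeholder episode runner that only exercises the harness."""
    return False


rates = run_protocol(always_fail)
total_episodes = sum(phase.episodes for phase in PROTOCOL)
```

Keeping the environment fixed and varying only `memory_source` is what makes the Phase A versus Phase B comparison a test of the memory rather than of the policy.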

FetchSlide success rate. Left: no memory (4%). Right: with FetchPush memory (12%). +8 percentage points from cross-environment experience transfer.

+8 percentage points doesn't sound like much — until you consider what's happening. The robot has zero training in FetchSlide. It's using memories from a different task, with different dynamics, to improve on Day 1. This is experience transfer, not transfer learning.
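The headline numbers can be unpacked with simple arithmetic. The sketch below assumes every one of the 50 episodes in a phase counts toward the reported rate, and converts the percentages back into episode counts.

```python
EPISODES_PER_PHASE = 50

baseline_rate = 0.04  # Phase A: no memory
transfer_rate = 0.12  # Phase B: FetchPush memory

# Convert rates back to whole-episode counts.
baseline_successes = round(baseline_rate * EPISODES_PER_PHASE)
transfer_successes = round(transfer_rate * EPISODES_PER_PHASE)

# Absolute gain in percentage points versus gain relative to the baseline.
point_gain = round((transfer_rate - baseline_rate) * 100)
relative_gain = transfer_successes / baseline_successes

print(f"{baseline_successes} vs {transfer_successes} successes out of {EPISODES_PER_PHASE}")
print(f"+{point_gain} percentage points, {relative_gain:.0f}x the baseline")
```

Under that assumption the jump is from 2 to 6 successful episodes: 8 percentage points in absolute terms, and three times the baseline in relative terms.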

The Code

The key insight is in how memories are stored and recalled. When the FetchPush robot succeeds, it stores the physical parameters with structured context:

```python
from robotmem import learn, recall

# After a successful push (in FetchPush)
learn(
    insight="FetchPush: success, dist 0.012m, 28 steps, force=12.5N, angle=0.34",
    context='{"task": {"success": true}, "spatial": {"target": [1.3, 0.7, 0.42]}, "params": {"force_peak": 12.5, "approach_angle": 0.34}}'
)

# In FetchSlide: recall successful FetchPush experiences
memories = recall(
    "push object toward target",
    context_filter={"task.success": True},
    spatial_sort={"field": "spatial.target", "target": slide_target}
)
```

The context_filter ensures we only retrieve successes. The spatial_sort ranks memories by proximity to the current target: physically nearby experiences are more relevant than distant ones.
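To make the two recall options concrete, here is a minimal pure-Python sketch of the behaviour just described: keep only records that match a dotted-path filter, then order them by distance to the current target. It illustrates the idea only and is not robotmem's implementation; the helper names `get_path` and `filter_and_sort`, the choice of Euclidean distance, and the sample records are all assumptions.

```python
import math
from typing import Any


def get_path(record: dict, dotted: str) -> Any:
    """Follow a dotted key such as 'task.success' through nested dicts."""
    value: Any = record
    for key in dotted.split("."):
        value = value[key]
    return value


def filter_and_sort(records, context_filter, field, target):
    """Keep records whose fields equal the filter values, nearest target first."""
    kept = [
        r for r in records
        if all(get_path(r, path) == want for path, want in context_filter.items())
    ]
    return sorted(kept, key=lambda r: math.dist(get_path(r, field), target))


# Made-up sample contexts, for illustration only.
records = [
    {"task": {"success": True}, "spatial": {"target": [1.30, 0.70, 0.42]}},
    {"task": {"success": False}, "spatial": {"target": [1.31, 0.71, 0.42]}},
    {"task": {"success": True}, "spatial": {"target": [1.20, 0.90, 0.42]}},
]
slide_target = [1.22, 0.88, 0.42]

ranked = filter_and_sort(records, {"task.success": True}, "spatial.target", slide_target)
```

In this toy data the failed push is dropped even though it sits close to the target, and the successful push nearest to `slide_target` comes first.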

The retrieved parameters (force, angle, approach distance) are blended into the FetchSlide policy as initial biases. The robot doesn't blindly copy — it uses past experience as a starting point and adapts.
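One plausible reading of "blended into the policy as initial biases" is a weighted interpolation between the heuristic's default parameters and the average of the recalled ones. The sketch below assumes exactly that; the function name `blend_params`, the `trust` weight, and the default values are hypothetical and not taken from the experiment code.

```python
from statistics import fmean


def blend_params(defaults: dict, recalled: list, trust: float = 0.5) -> dict:
    """Interpolate each default parameter toward the mean of recalled values.

    trust=0 ignores memory entirely; trust=1 copies the recalled mean.
    Parameters absent from every recalled memory keep their default.
    """
    if not 0.0 <= trust <= 1.0:
        raise ValueError("trust must lie in [0, 1]")
    blended = {}
    for name, default in defaults.items():
        values = [m[name] for m in recalled if name in m]
        if values:
            blended[name] = (1 - trust) * default + trust * fmean(values)
        else:
            blended[name] = default
    return blended


# Hypothetical heuristic defaults and two recalled FetchPush parameter sets.
defaults = {"force_peak": 10.0, "approach_angle": 0.0}
recalled = [
    {"force_peak": 12.5, "approach_angle": 0.34},
    {"force_peak": 11.5, "approach_angle": 0.30},
]
initial_bias = blend_params(defaults, recalled, trust=0.5)
```

A `trust` value below 1 keeps the policy from copying push parameters outright, which matches the "starting point, then adapt" behaviour described above. With no recalled memories the function returns the defaults unchanged, which corresponds to the no-memory baseline.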

Reproduce It Yourself

```bash
git clone https://github.com/robotmem/robotmem
cd robotmem
pip install -e . gymnasium-robotics
cd examples/fetch_push
PYTHONPATH=../../src python experiment.py  # Build FetchPush memory first
PYTHONPATH=../../src python cross_env.py   # Test cross-environment transfer
# Output: Phase A 4% → Phase B 12% (+8%)
```

The cross-environment experiment requires FetchPush memory from experiment.py first (~5 min), then runs 150 FetchSlide episodes (~10 min). You'll see the improvement in the terminal output.

Give Your Robot Cross-Environment Memory

Learn once, use everywhere. Open source, pure CPU.